I. The Current Situation

According to Datadog’s article on the state of security in AWS:

36% of organizations with at least one Amazon S3 bucket have it configured to be publicly readable. This is a significant cybersecurity risk, as publicly accessible S3 buckets can expose sensitive data to unauthorized individuals, leading to potential data breaches, data theft, and a host of compliance issues.

We could model the attack from a high-level point of view as follows:

In this article, we will focus on the reconnaissance techniques used by attackers in part 1 of the figure above.

I.1 Why Are Cloud Services a Common Target?

Cloud services like AWS S3 offer flexibility and scalability, but misconfigurations in these environments are unfortunately common. The ease of creating buckets often leads to security oversights, especially in fast-paced DevOps pipelines. Threat actors know this and actively search for open buckets across popular cloud services.

II. Enumeration Techniques

II.1 Google Dorking to Locate Buckets

Google Dorking uses advanced search operators to find information that ordinary searches do not surface. When it comes to S3 buckets, specific dorks can reveal buckets left exposed by misconfiguration.

Example Commands:

First command result example:

Search results will list web pages or direct links to S3 buckets. Verify the legitimacy of each link, as some may be outdated or reference non-existent buckets. For actual buckets, proceed to check the permissions and contents, ideally reporting any misconfigurations to the bucket owner.
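As a minimal sketch of how such dorks can be assembled, the snippet below builds a few query strings that restrict results to S3 hostnames. The keyword `example-corp`, the function names, and the specific operator combinations are illustrative assumptions, not the article's original commands; only the standard `site:`, `inurl:`, and `filetype:` operators are used.

```python
from urllib.parse import quote_plus


def build_s3_dorks(keyword: str) -> list:
    """Return a few dork strings that scope results to S3 hostnames.

    The combinations below are illustrative assumptions, not an exhaustive list.
    """
    return [
        f'site:s3.amazonaws.com "{keyword}"',
        f'site:amazonaws.com inurl:s3 "{keyword}"',
        f'site:s3.amazonaws.com filetype:pdf "{keyword}"',
    ]


def to_search_url(dork: str) -> str:
    """URL-encode a dork so it can be opened directly in a browser."""
    return "https://www.google.com/search?q=" + quote_plus(dork)


if __name__ == "__main__":
    # "example-corp" is a hypothetical target keyword.
    for dork in build_s3_dorks("example-corp"):
        print(dork)
        print(to_search_url(dork))
```

Generating the strings in code rather than typing them by hand makes it easier to reuse the same set of operators across several target keywords.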

II.2 Burp Suite Exploration

Burp Suite is a powerful tool for web application security pentesting. It can be used for S3 bucket reconnaissance by monitoring HTTP requests that contain bucket information.

Configure your browser to use Burp Suite as its proxy, then browse the target application. Burp Suite will automatically capture the traffic; you can then review the site map it generates for any S3 bucket links or headers.

Look for patterns such as:

- URLs containing “s3.amazonaws.com”

- Headers beginning with “x-amz-” (for example “x-amz-bucket-region”)

For instance:

The Burp extension at https://github.com/portswigger/aws-security-checks, available from the BApp Store, can also be useful here.

The traffic analysis capabilities of Burp Suite allow for detailed scrutiny of web applications and can surface S3 buckets that are referenced only in indirect or nested requests.
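The kind of pattern matching described in this section can be sketched outside Burp as well. The hypothetical helper below takes a URL and a dictionary of response headers, such as one exported from captured traffic, and lists the reasons the exchange looks S3-backed. The function name and the `Server: AmazonS3` check are assumptions added for illustration; this is not a Burp API.

```python
from urllib.parse import urlparse


def s3_indicators(url: str, headers: dict) -> list:
    """Return the reasons a captured request/response pair looks S3-backed."""
    reasons = []

    # Hostname check: covers bucket.s3.amazonaws.com, s3.<region>.amazonaws.com
    # and the older bucket.s3-<region>.amazonaws.com form.
    host = (urlparse(url).hostname or "").lower()
    dotted = "." + host
    if host.endswith("amazonaws.com") and (".s3." in dotted or ".s3-" in dotted):
        reasons.append(f"S3 hostname: {host}")

    # Header check: S3 responses carry x-amz-* headers and "Server: AmazonS3".
    lowered = {name.lower(): value for name, value in headers.items()}
    amz_headers = sorted(name for name in lowered if name.startswith("x-amz-"))
    if amz_headers:
        reasons.append("x-amz headers: " + ", ".join(amz_headers))
    if lowered.get("server", "").lower() == "amazons3":
        reasons.append("Server header is AmazonS3")

    return reasons
```

The header check matters because a bucket served behind a custom domain will not show an S3 hostname in the URL, yet the response headers can still give it away.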

II.3 Using the “aws s3 ls” Command in Recon

If you have AWS CLI access (or gain it through leaked or misconfigured credentials), the command

aws s3 ls s3://bucket-name

can reveal:

- Existence of the bucket

- Directory structure

- Timestamps of files

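This command is a stealthy and powerful way to verify whether a bucket is live and whether it leaks any sensitive data. For buckets that allow public listing, adding the --no-sign-request flag sends the request anonymously, so no credentials are needed.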
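Under the hood, a bucket listing is an XML document (`ListBucketResult`) returned by the S3 REST API. The sketch below parses such a document and extracts the same three pieces of information listed above: the bucket name, the top-level directory structure, and per-object timestamps. The `SAMPLE` payload is made-up illustrative data, and `parse_listing` is a hypothetical helper name.

```python
import xml.etree.ElementTree as ET

# XML namespace used by S3 list responses.
S3_NS = {"s3": "http://s3.amazonaws.com/doc/2006-03-01/"}

# Illustrative payload only; not taken from a real bucket.
SAMPLE = """<ListBucketResult xmlns="http://s3.amazonaws.com/doc/2006-03-01/">
  <Name>demo-bucket</Name>
  <Contents>
    <Key>backups/db.sql</Key>
    <LastModified>2024-01-15T10:30:00.000Z</LastModified>
    <Size>1024</Size>
  </Contents>
  <Contents>
    <Key>index.html</Key>
    <LastModified>2024-02-01T08:00:00.000Z</LastModified>
    <Size>512</Size>
  </Contents>
</ListBucketResult>"""


def parse_listing(xml_text: str) -> dict:
    """Extract bucket name, objects, and top-level prefixes from a listing."""
    root = ET.fromstring(xml_text)
    objects = [
        {
            "key": item.findtext("s3:Key", namespaces=S3_NS),
            "last_modified": item.findtext("s3:LastModified", namespaces=S3_NS),
            "size": int(item.findtext("s3:Size", default="0", namespaces=S3_NS)),
        }
        for item in root.findall("s3:Contents", S3_NS)
    ]
    # Top-level "directories" are the first path segment of keys containing "/".
    prefixes = sorted(
        {obj["key"].split("/", 1)[0] + "/" for obj in objects if "/" in obj["key"]}
    )
    return {
        "bucket": root.findtext("s3:Name", namespaces=S3_NS),
        "objects": objects,
        "prefixes": prefixes,
    }


if __name__ == "__main__":
    listing = parse_listing(SAMPLE)
    print(listing["bucket"], listing["prefixes"])
    for obj in listing["objects"]:
        print(obj["last_modified"], obj["size"], obj["key"])
```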

II.4 GitHub Recon Tools

There’s a treasure trove of S3 reconnaissance tools on GitHub. These tools range in functionality from scanning bucket names to checking for public accessibility and dumping contents.

Leveraging automated tools can vastly increase the efficiency and breadth of your reconnaissance. After running these tools, the next steps should involve assessing the identified buckets’ configurations, understanding the potential risks, and, if necessary, alerting the responsible parties.
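Many of these tools start by generating candidate bucket names from a company or project keyword. A minimal sketch of that step, assuming a small hypothetical affix list, might look like the following; real tools typically ship far larger wordlists, and this is not the implementation of any particular one.

```python
from itertools import product


def candidate_bucket_names(
    keyword: str,
    affixes=("dev", "staging", "prod", "backup", "assets"),
    separators=("-", ".", ""),
) -> list:
    """Combine a keyword with common affixes to guess likely bucket names.

    The default affixes and separators are illustrative assumptions.
    """
    keyword = keyword.lower()
    names = {keyword}
    for affix, sep in product(affixes, separators):
        names.add(f"{keyword}{sep}{affix}")
        names.add(f"{affix}{sep}{keyword}")
    # S3 bucket names must be between 3 and 63 characters long.
    return sorted(name for name in names if 3 <= len(name) <= 63)


if __name__ == "__main__":
    # "acme" is a hypothetical target keyword.
    for name in candidate_bucket_names("acme"):
        print(name)
```

Each candidate would then be checked for existence and public access, which is the part the scanning tools automate at scale.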

II.5 Online Websites and SaaS Scanners

Online resources can streamline the S3 bucket discovery process. Nuclei templates, specifically, are predefined patterns used to detect common vulnerabilities, including misconfigured S3 buckets.

For instance you can use:

https://buckets.grayhatwarfare.com/

Tools like OSINT.sh and GrayHatWarfare are tailor-made to simplify the search process, pulling from pools of data that might take an individual researcher considerable time to amass.

What’s more, the existence of SaaS services accessible in just three clicks shows how widespread this attack has become. Attackers have even developed automated programs for scanning and collecting objects publicly exposed in S3 buckets.

II.6 Regex Mastery

Mastering simple regex can be one of the most efficient ways to conduct S3 bucket reconnaissance. By chaining simple commands, you can create powerful searches.

Running Commands:

Here’s how to use regex with curl to extract S3 bucket URLs from JavaScript files:

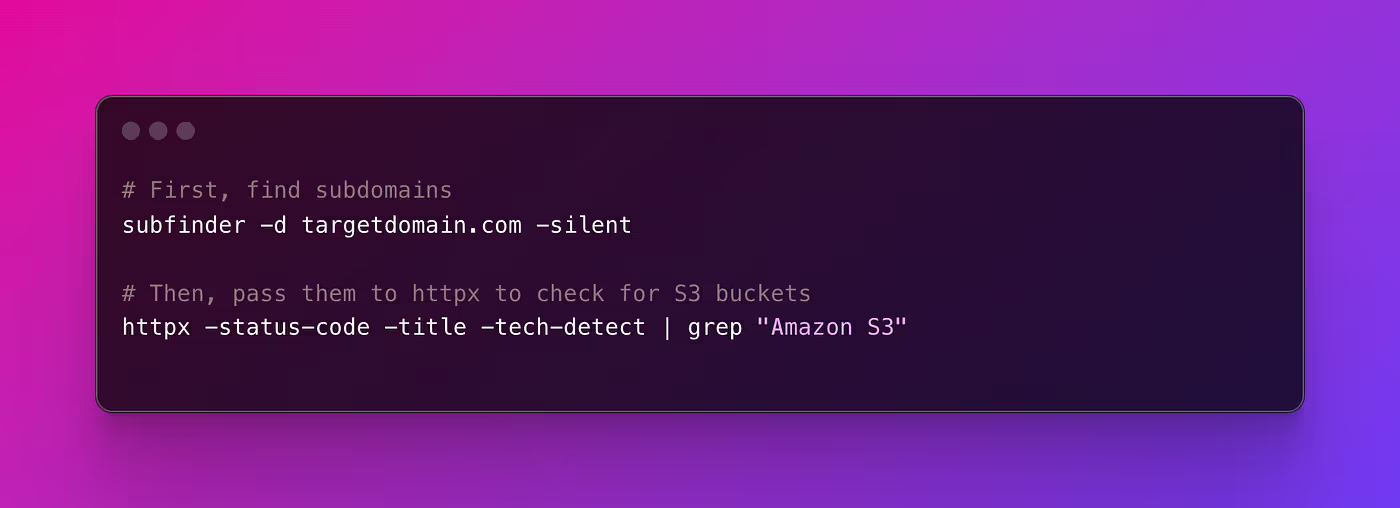
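For readers who prefer a scriptable version, the following is a rough Python sketch of the same idea: given the text of a JavaScript file that has already been downloaded (for example with curl), pull out bucket names from both virtual-hosted and path-style S3 URLs. The regular expressions and the function name are assumptions written for this sketch and are not necessarily the expression shown in the image above.

```python
import re

# Allowed bucket-name shape: lowercase letters, digits, dots, hyphens.
BUCKET = r"[a-z0-9][a-z0-9.-]{1,61}[a-z0-9]"

# Virtual-hosted style: <bucket>.s3.amazonaws.com or <bucket>.s3[.-]<region>.amazonaws.com
VHOST_RE = re.compile(
    rf"\b({BUCKET})\.s3(?:[.-][a-z0-9-]+)?\.amazonaws\.com", re.IGNORECASE
)

# Path style: s3.amazonaws.com/<bucket> or s3[.-]<region>.amazonaws.com/<bucket>
# The lookbehind stops this from matching the tail of a virtual-hosted URL.
PATH_RE = re.compile(
    rf"(?<![a-z0-9.-])s3(?:[.-][a-z0-9-]+)?\.amazonaws\.com/({BUCKET})",
    re.IGNORECASE,
)


def extract_bucket_names(js_text: str) -> list:
    """Return the sorted, de-duplicated bucket names referenced in js_text."""
    found = set()
    for pattern in (VHOST_RE, PATH_RE):
        for match in pattern.finditer(js_text):
            found.add(match.group(1).lower())
    return sorted(found)


if __name__ == "__main__":
    # Hypothetical JavaScript snippet used only for demonstration.
    sample_js = (
        'var cdn = "https://acme-assets.s3.amazonaws.com/app.js";\n'
        'fetch("https://s3.eu-west-1.amazonaws.com/acme-backups/db.sql");\n'
        'img.src = "//acme-media.s3-us-west-2.amazonaws.com/x.png";\n'
    )
    print(extract_bucket_names(sample_js))
```

Handling both URL styles matters because older applications often still use path-style addressing, which a pattern written only for `bucket.s3.amazonaws.com` would miss.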

And for using subfinder and httpx:

The command-line outputs will typically provide you with raw URLs or status codes. A 200 status code on an S3 bucket URL, for example, indicates that the bucket can be read anonymously, whereas a 403 usually means the bucket exists but access is denied, and a 404 means no such bucket exists. Further exploration of these command-line techniques offers granular control over the reconnaissance process and can be customized for specific scenarios. The output from these commands must be carefully analyzed to distinguish between normal bucket usage and potential security risks.
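When triaging a long list of results, it can help to translate status codes into a short verdict. The sketch below assumes the code comes from an unauthenticated GET on the bucket's root URL; the function name and the wording of each verdict are illustrative choices.

```python
def interpret_bucket_status(status: int) -> str:
    """Map the HTTP status of an anonymous GET on a bucket root to a verdict."""
    if status == 200:
        return "public: anonymous listing is allowed"
    if status == 403:
        return "exists: bucket is present but access is denied"
    if status == 404:
        return "missing: no such bucket"
    if status == 301:
        return "exists: bucket lives on a different regional endpoint"
    return "inconclusive: inspect the response manually"


if __name__ == "__main__":
    # Hypothetical results, e.g. collected from a status-code scan.
    results = {"acme-assets": 200, "acme-backups": 403, "acme-old": 404}
    for bucket, status in results.items():
        print(f"{bucket}: {interpret_bucket_status(status)}")
```

A 403 is still useful information: it confirms the bucket name is valid, which can guide further testing of individual object paths or other permissions.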

Conclusion

Understanding AWS S3 enumeration is crucial for identifying and securing misconfigured S3 buckets, which are potential gateways to sensitive data exposure.

Identifying these vulnerabilities is only the first step. Action must be taken to mitigate these risks, ensuring data remains secure against potential breaches. This is where Resonance Security steps in.

For companies looking to enhance their cloud security posture, we offer tailored pentests & audits designed to meet the unique challenges of securing your cloud infrastructure. Learn more about how we can support your cloud security needs at Resonance Security, or set up a quick call with our cloud security experts: https://calendly.com/resonance-security/30min

About the Author:

Ilan Abitbol is a cybersecurity expert and a member of the Resonance Security team, specializing in web2 security and cloud infrastructure audits.
